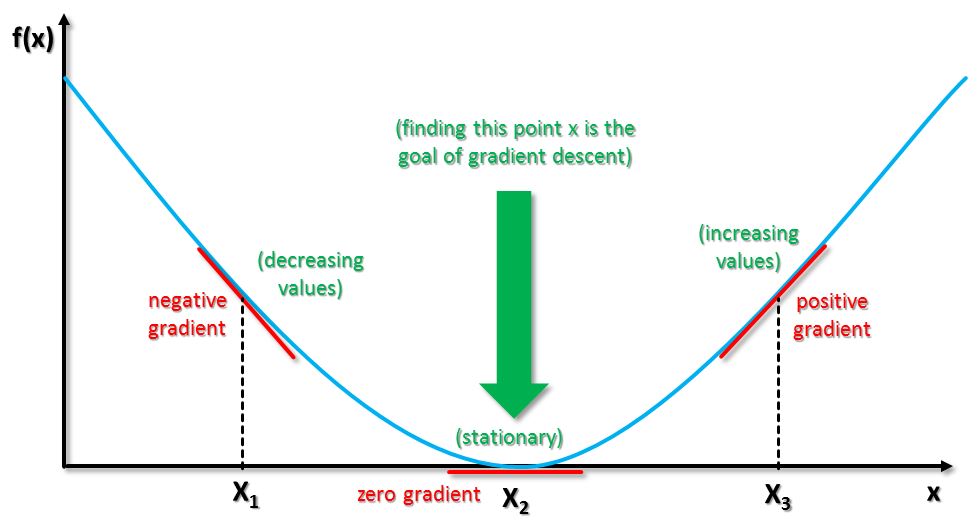

Data scientists implement a gradient descent algorithm in machine learning to minimize a cost function. The algorithm is typically run first with training data, and the errors on its predictions are used to update the parameters of a model. For linear regression, the parameters are the coefficients, while in a neural network, the parameters are the weights. The goal is to find the model parameters that minimize the loss while keeping the model accurate, which helps to reduce errors in future tests or once the model is live.

The term gradient descent refers to the changes to the model that move it along a slope, or gradient, in a graph toward the lowest possible error value. Each time the algorithm runs, it moves step by step in the direction of steepest descent, defined by the negative of the gradient. The size of these steps is known as the learning rate. A higher learning rate takes larger steps, which covers more ground per iteration but may be less precise and can overshoot the minimum. A lower learning rate is more precise but can be time-consuming to run on large datasets.

Gradient descent is most appropriate when the parameters cannot be calculated analytically (for example, with linear algebra) and must instead be searched for by an optimization algorithm. It can also be a much cheaper and faster way to find a solution than solving for the parameters directly, particularly on large problems.

The descent procedure starts with initial values for the coefficient or coefficients of the function. The data scientist evaluates the cost of these coefficients using the cost function and then calculates the derivative, or slope, of the cost function at that point. The slope indicates the direction in which to move the coefficient values to get a lower cost on the next iteration. A learning rate parameter, alpha ($\alpha$), controls how much the coefficients can change on each update; this is also called the step size. The procedure repeats until the cost of the coefficients is as close to 0.0 as possible or stops decreasing.

Variants of gradient descent differ in the number of training examples used to calculate the error before each update.
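The procedure above can be sketched as batch gradient descent for a simple linear regression. This is a minimal sketch under stated assumptions, not the article's own implementation: it assumes a single input feature, a mean-squared-error cost, and starting coefficients of 0.0, and the names `mse_cost` and `gradient_descent` and the defaults for `alpha`, `max_iters`, and `tol` are hypothetical choices for illustration. Each iteration evaluates the slope over the full training set, steps against it scaled by `alpha`, and stops once the cost no longer improves.

```python
# Minimal sketch: batch gradient descent for simple linear regression,
# fitting y ≈ b0 + b1 * x by minimizing the mean squared error (MSE).
# Function names and default values are illustrative assumptions.


def mse_cost(b0, b1, xs, ys):
    """Mean squared error of the line b0 + b1 * x over the data."""
    return sum((b0 + b1 * x - y) ** 2 for x, y in zip(xs, ys)) / len(xs)


def gradient_descent(xs, ys, alpha=0.05, max_iters=10_000, tol=1e-12):
    """Return (b0, b1, final_cost, iterations_used)."""
    n = len(xs)
    b0, b1 = 0.0, 0.0                   # initial coefficient values
    cost = mse_cost(b0, b1, xs, ys)     # cost of the starting coefficients
    iters = 0
    for iters in range(1, max_iters + 1):
        # Slope of the cost with respect to each coefficient, computed
        # over the full training set (this is what makes it "batch").
        errors = [b0 + b1 * x - y for x, y in zip(xs, ys)]
        grad_b0 = 2.0 * sum(errors) / n
        grad_b1 = 2.0 * sum(e * x for e, x in zip(errors, xs)) / n
        # Step against the gradient; alpha sets the step size.
        b0 -= alpha * grad_b0
        b1 -= alpha * grad_b1
        new_cost = mse_cost(b0, b1, xs, ys)
        converged = abs(cost - new_cost) < tol  # cost stopped improving
        cost = new_cost
        if converged:
            break
    return b0, b1, cost, iters


if __name__ == "__main__":
    # Toy data lying exactly on y = 1 + 2x.
    xs = [0.0, 1.0, 2.0, 3.0, 4.0]
    ys = [1.0 + 2.0 * x for x in xs]
    for alpha in (0.05, 0.01):
        b0, b1, cost, iters = gradient_descent(xs, ys, alpha=alpha)
        print(f"alpha={alpha}: b0={b0:.4f}, b1={b1:.4f}, "
              f"cost={cost:.2e}, iterations={iters}")
```

On the toy data, both runs recover coefficients close to 1 and 2, but the smaller `alpha` needs noticeably more iterations, which mirrors the trade-off between step size and running time. If `alpha` is set too large, the updates overshoot the minimum and the cost grows instead of shrinking.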

Batch methods, such as limited-memory BFGS, which use the full training set to compute the next update to the parameters at each iteration, tend to converge very well to local optima. They are also straightforward to get working given a good off-the-shelf implementation (e.g. minFunc), because they have very few hyperparameters to tune. However, in practice computing the cost and gradient for the entire training set can be very slow, and it can be intractable on a single machine if the dataset is too big to fit in main memory. Another issue with batch optimization methods is that they don't give an easy way to incorporate new data in an "online" setting.

Stochastic Gradient Descent (SGD) addresses both of these issues by following the negative gradient of the objective after seeing only a single or a few training examples. The use of SGD in the neural network setting is motivated by the high cost of running backpropagation over the full training set; SGD can overcome this cost and still lead to fast convergence.

The standard gradient descent algorithm updates the parameters $\theta$ of the objective $J(\theta)$ as

$$\theta = \theta - \alpha \nabla_\theta \, \mathbb{E}\left[J(\theta)\right]$$

where the expectation is approximated by evaluating the cost and gradient over the full training set. SGD simply does away with the expectation in the update and computes the gradient of the parameters using only a single or a few training examples:

$$\theta = \theta - \alpha \nabla_\theta J\left(\theta; x^{(i)}, y^{(i)}\right)$$

where $(x^{(i)}, y^{(i)})$ is a pair from the training set.

With momentum, SGD keeps a velocity that accumulates past gradients, and the update becomes

$$v = \gamma v + \alpha \nabla_\theta J\left(\theta; x^{(i)}, y^{(i)}\right)$$

$$\theta = \theta - v$$

Here $v$ is the current velocity vector, which has the same dimension as the parameter vector $\theta$. The learning rate $\alpha$ is as described above, although when using momentum $\alpha$ may need to be smaller, since accumulating past gradients makes each step larger. Finally, $\gamma \in (0,1]$ determines for how many iterations the previous gradients are incorporated into the current update. Generally, $\gamma$ is set to 0.5 until the initial learning stabilizes and is then increased to 0.9 or higher.
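The SGD and momentum updates described above can be sketched in plain Python. This is a minimal sketch under stated assumptions: it fits a one-feature linear regression with a squared-error cost, the function `sgd_momentum` and its parameter names are hypothetical, and the five-epoch warm-up before raising $\gamma$ from 0.5 to 0.9 is an arbitrary stand-in for "until the initial learning stabilizes". Each update uses a gradient computed on a small random minibatch (one example by default), then applies $v = \gamma v + \alpha \nabla_\theta J$ and $\theta = \theta - v$.

```python
import random

# Minimal sketch: minibatch SGD with momentum for a one-feature linear
# regression y ≈ theta[0] + theta[1] * x with a squared-error cost.
# Function name, parameters, and warm-up length are assumptions.


def sgd_momentum(xs, ys, alpha=0.001, epochs=1000, batch_size=1,
                 gamma_start=0.5, gamma_final=0.9, warmup_epochs=5, seed=0):
    """Return the fitted parameter vector [theta0, theta1]."""
    rng = random.Random(seed)
    theta = [0.0, 0.0]                 # parameter vector
    v = [0.0, 0.0]                     # velocity, same dimension as theta
    order = list(range(len(xs)))
    for epoch in range(epochs):
        # Smaller gamma while learning settles, then a larger one.
        gamma = gamma_start if epoch < warmup_epochs else gamma_final
        rng.shuffle(order)             # new random order every epoch
        for start in range(0, len(order), batch_size):
            batch = order[start:start + batch_size]
            # Gradient of the squared error on this minibatch only,
            # instead of the expectation over the full training set.
            g0 = g1 = 0.0
            for i in batch:
                err = theta[0] + theta[1] * xs[i] - ys[i]
                g0 += 2.0 * err
                g1 += 2.0 * err * xs[i]
            g0 /= len(batch)
            g1 /= len(batch)
            # Momentum: v = gamma * v + alpha * grad; theta = theta - v
            v[0] = gamma * v[0] + alpha * g0
            v[1] = gamma * v[1] + alpha * g1
            theta[0] -= v[0]
            theta[1] -= v[1]
    return theta


if __name__ == "__main__":
    xs = [0.0, 1.0, 2.0, 3.0, 4.0]
    ys = [1.0 + 2.0 * x for x in xs]   # toy data on y = 1 + 2x
    print(sgd_momentum(xs, ys))                # one example per update
    print(sgd_momentum(xs, ys, batch_size=2))  # small minibatches
```

The default `alpha` is kept small because momentum effectively enlarges each step. As a design note, setting `batch_size=len(xs)` and both gamma values to 0 reduces this loop to plain batch gradient descent, which makes the relationship between the three update rules easy to compare.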
